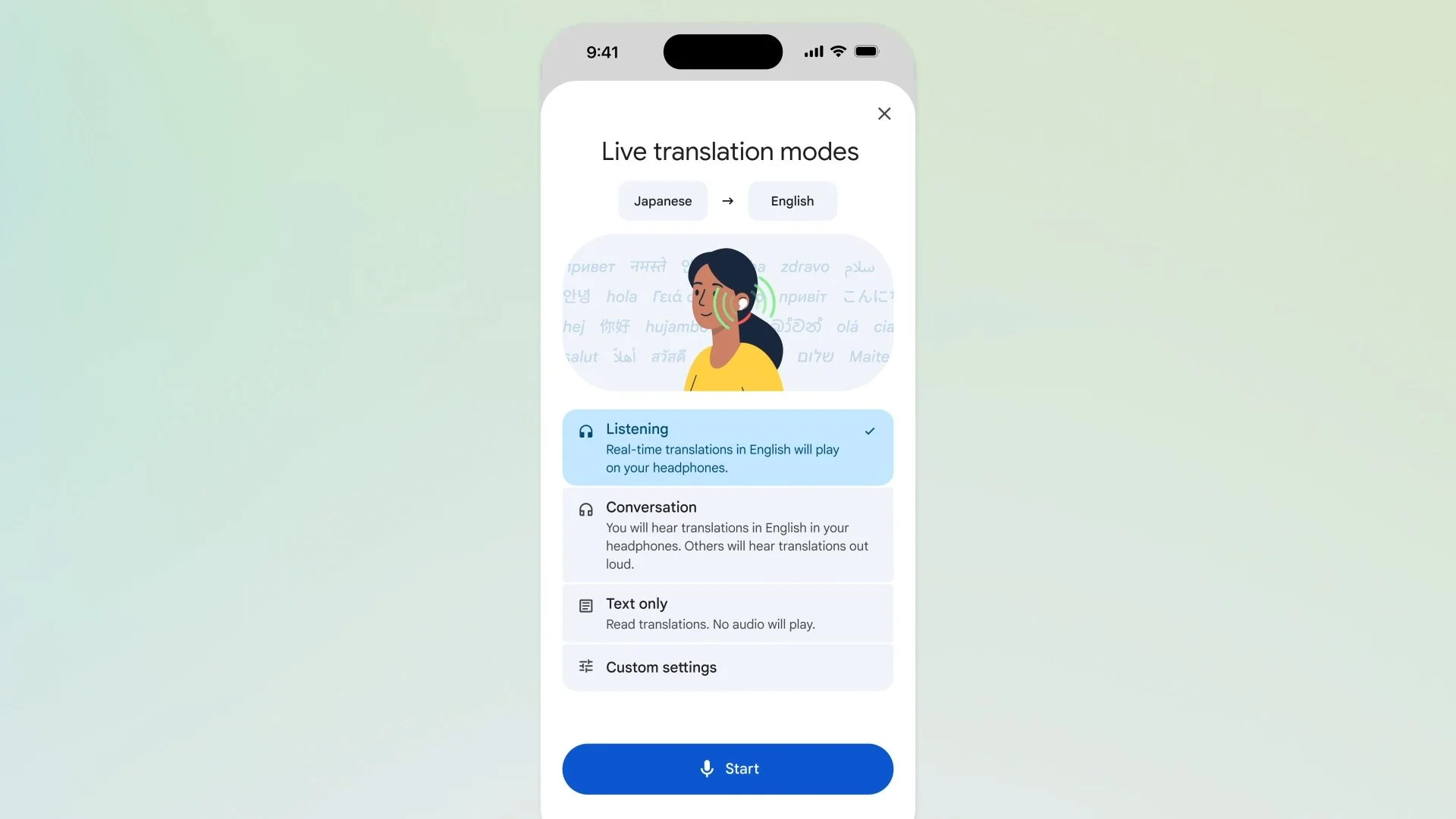

Google has brought one of its most advanced AI translation features to iPhone users. Live Translate with headphones is now rolling out on iOS, several months after it launched on Android in December.

The feature turns standard headphones into a real-time interpreter, letting users follow conversations in other languages without constantly checking their screens.

Live translation supports more than 70 languages and is expanding to additional markets, including France, Germany, Italy, Japan, Spain, Thailand, and the United Kingdom.

Turning headphones into simultaneous interpreters

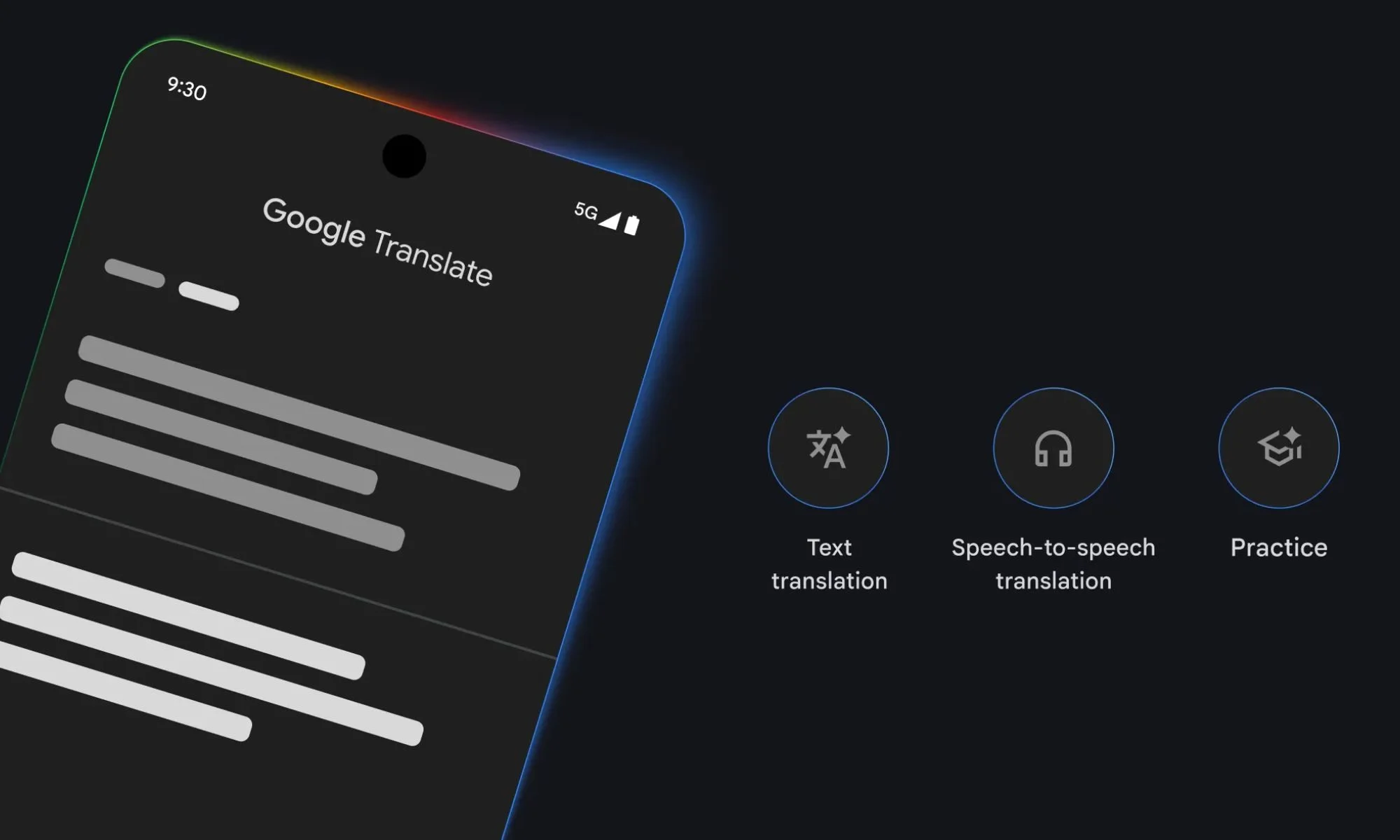

Live translation runs inside the Google Translate app. Once a user pairs compatible headphones, the app processes incoming speech and plays the translated audio directly through them.

The technology goes beyond word-for-word translation. According to Google, the system preserves the speaker's vocal characteristics and rhythm, which helps listeners pick up on the subtleties of a conversation.

The feature fits into Google's broader integration of Gemini-powered translation, which prioritizes meaning over literal, word-for-word rendering. That approach handles slang, idioms, and conversational speech more accurately.

Enabling seamless cross-language communication

The feature is aimed at practical, everyday situations. Users can join conversations with family members or friends who speak other languages, follow public announcements while traveling abroad, or ask locals for recommendations without losing the context of what is said.

Setup is minimal. Users pair their headphones, open the Translate app, and select the Live Translate option. The app then translates automatically as the conversation unfolds.

Google continues to expand Translate in other ways, including translation modes that let users choose between speed and precision, and experimental features such as a pronunciation coaching tool for refining accents.